The latest announcements from OpenAI and Anthropic mark another important step forward for frontier AI. They also reinforce something we’ve believed at SentinelOne for years: the future of cybersecurity will be shaped by AI-native defense.

SentinelOne has worked closely with frontier labs for years, including OpenAI, Anthropic, and Google DeepMind, and continues to do so. While we cannot always share the specifics of every collaboration, these partnerships have provided, and continue to provide, meaningful insight into how advanced models are evolving and where they can create real impact across security. Many of these learnings and capabilities are already embedded in our platform, protecting customers from the most advanced attacks every day, including stopping zero-day exploits that no other solution is currently able to stop.

What stands out most is not simply that frontier models are becoming more capable, but that they are accelerating the broader shift toward faster, more intelligent, and more automated security operations. On the one hand, they are improving how the cyber industry and defenders identify weaknesses, analyze complex systems, and reason about attack paths at scale. On the other, they are giving attackers the advantage of speed and scale when it comes to finding new vulnerabilities. Progress in this race matters, but it is only one part of the broader security picture.

In practice, and without discounting the severity of uncovering exponentially more bugs in software, raw vulnerability counts rarely map cleanly to real-world risk. Many vulnerabilities are not meaningfully exploitable in live environments, and the risk from many others is already reduced by architectural layers, controls, mitigations, and runtime protections. The gap between theoretical exposure and operational risk is often substantial. What matters most is the ability to understand real conditions, prioritize the exposures that carry real risk, and stop actual attacks across complex environments, even when faced with novel threats and zero-days.

That has been SentinelOne’s pioneering principle and the advantage we’ve delivered to our customers from the beginning.

From day one, SentinelOne was built to operate at machine speed, using behavioral AI, automation, and autonomous protection to detect, defend, and respond across endpoint, cloud, identity, data, network, and AI attack surfaces. As frontier AI continues to advance, the value of that approach only grows. To demonstrate our commitment to these principles, we offer two distinct examples.

First, in the last few weeks alone, the benefit of this approach has played out in supply chain attacks such as those involving LiteLLM, Axios, and CPU-Z, all illustrative of novel threats and of the risk posed by trusted agents and workflows in the AI era. In each case, autonomous response at machine speed was the only way to block these novel threats, which leverage unpatched or zero-day vulnerabilities.

Second, SentinelOne has expanded its own ongoing efforts to secure our technology. Along with the standard, established practices we’ve used for years, SentinelOne has used multiple AI-driven models to constantly examine our technology and architecture, using techniques virtually identical to those discussed in Anthropic’s technical details for researchers and practitioners, released on April 7, 2026 (“Assessing Claude Mythos Preview’s cybersecurity capabilities”). This activity has been ongoing for months. Its findings are reviewed consistently, and the program as a whole is evaluated by the SentinelOne executive team. We are committed to building and delivering secure technology, and we do not see an effective future in this work without robust AI-driven methods and an inclusive, multi-model approach.

As we look at the overall AI landscape, the shift is already underway, and it plays directly to SentinelOne’s strengths. The industry is moving toward more autonomous, more adaptive, and more intelligence-driven security. That is the future we helped pioneer, and one we are uniquely positioned to lead.

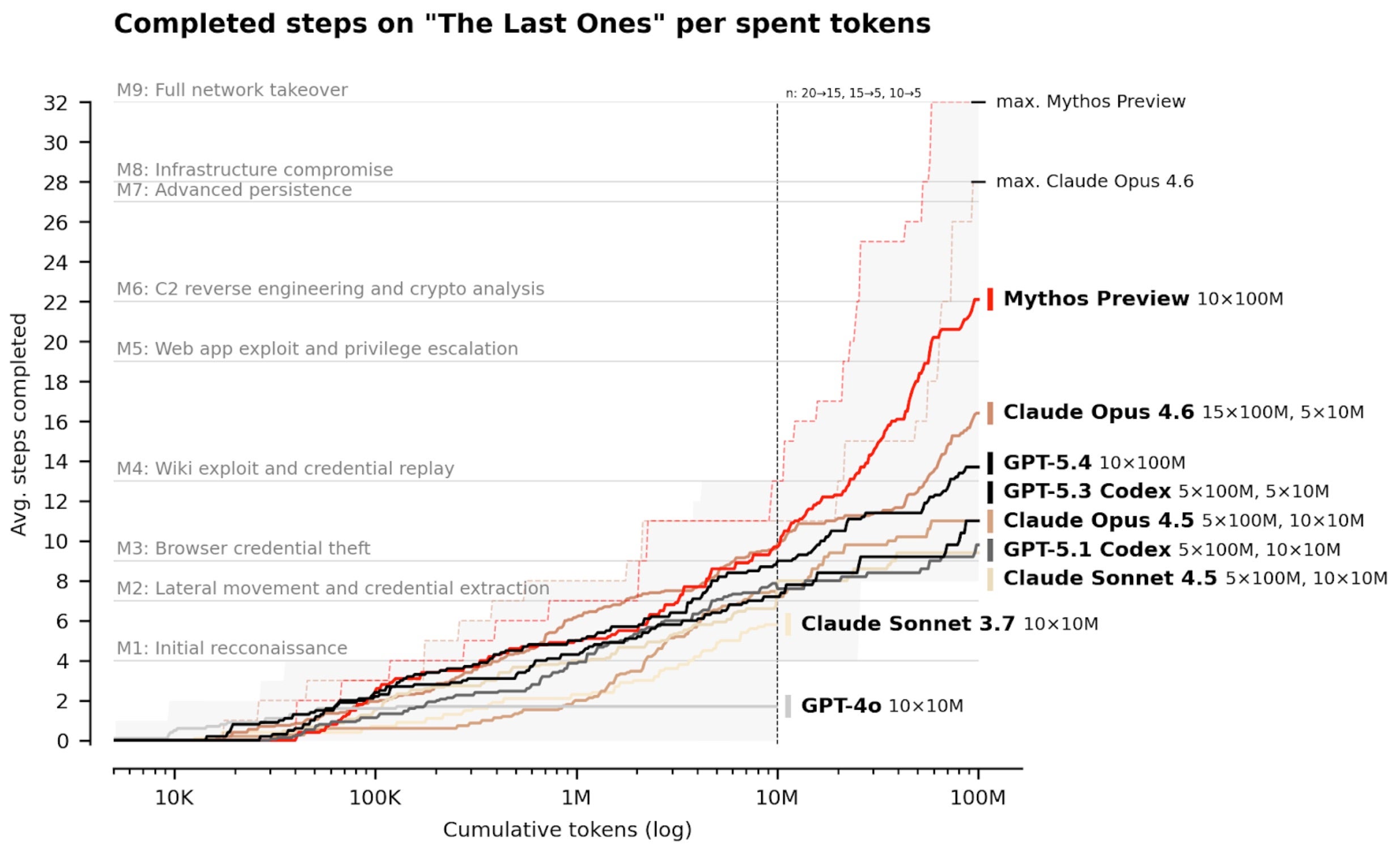

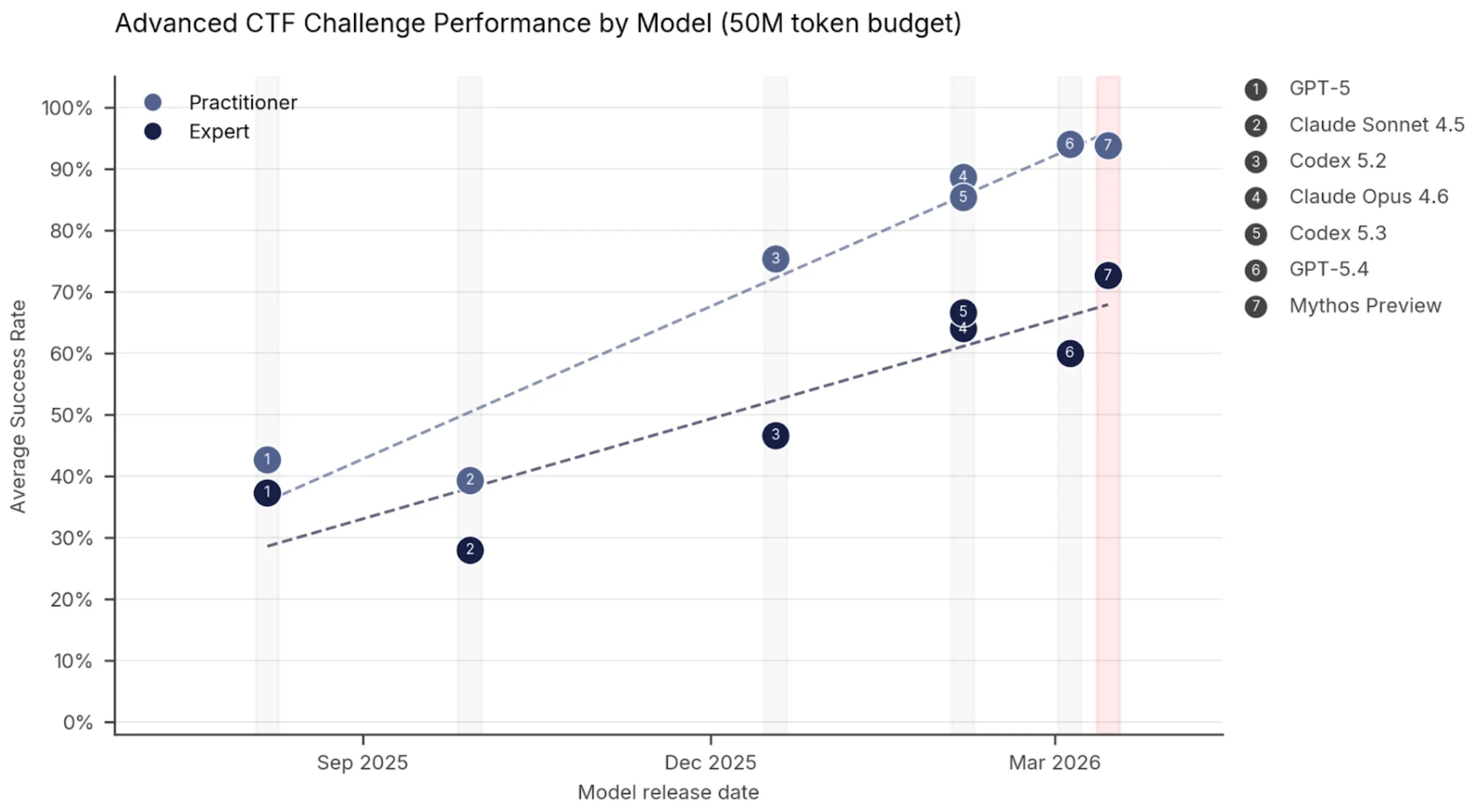

Our clear advice to defenders: invest in machine-speed defense and visibility right now. Ensure your defenses are up to date and well configured. Ground yourself in true research, not in pompous press releases and vacant claims. As an example, much of the press coverage and information shared by third parties around Anthropic’s new model release has lacked any substantive data; in many cases, those statements preceded any real, tangible experience with the preview models in question. In contrast, the AI Security Institute (AISI) released a detailed research evaluation of relevant models, which sheds light on the state of frontier AI, exploitation rates, and potential real-world implications. It clearly shows that the trajectory has been apparent for a while, even in older models, and that the capability has existed for some time, in many cases as a function of compute scaling and potentially also as a result of looser guardrails that allow more effective use of compute and reasoning than in guardrailed models.

The AI Security Institute also goes on to outline the following implications:

“Mythos Preview’s success on one cyber range indicates that it is at least capable of autonomously attacking small, weakly defended and vulnerable enterprise systems where access to a network has been gained. However, our ranges have important differences from real-world environments that make them easier targets. They lack security features that are often present, such as active defenders and defensive tooling. There are also no penalties for the model for undertaking actions that would trigger security alerts. This means we cannot say for sure whether Mythos Preview would be able to attack well-defended systems.

In a regime where attackers can direct and provide network access to models to conduct autonomous attacks on poorly defended systems, cybersecurity evaluations must evolve. As capabilities continue to improve, evaluation environments that lack defenses will no longer be challenging enough to discriminate between the capabilities of the most cyber-capable models or assess trends. Our future work will involve evaluating capabilities using ranges simulating hardened and defended environments, including ranges with active monitoring, endpoint detection and real-time incident response. We will also be tracking how AI-enabled vulnerability discovery and penetration testing campaigns perform on real-world systems.”

Stay safe,

The SentinelOne team